Open the PySpark shell by running the pyspark command from any directory (as you’ve added the Spark bin directory to the PATH). If Spark has been successfully installed, you should see the following output (with informational logging messages omitted for brevity):

Welcome to

SparkContext available as sc, HiveContext available as sqlContext.
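If the pyspark command is not found, the usual cause is that the Spark bin directory is missing from the PATH. A minimal sketch for checking this is shown here; the helper name check_on_path is hypothetical and is not part of Spark or of the original text.

```shell
# Hypothetical helper (not part of Spark): report where a command
# resolves from on the PATH, or fail if it cannot be found.
check_on_path() {
    cmd="$1"
    if location=$(command -v "$cmd"); then
        echo "$cmd found at $location"
    else
        echo "$cmd not found on PATH" >&2
        return 1
    fi
}
```

Calling check_on_path pyspark before launching the shell should print the location of the pyspark launcher if the PATH is set as described above.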

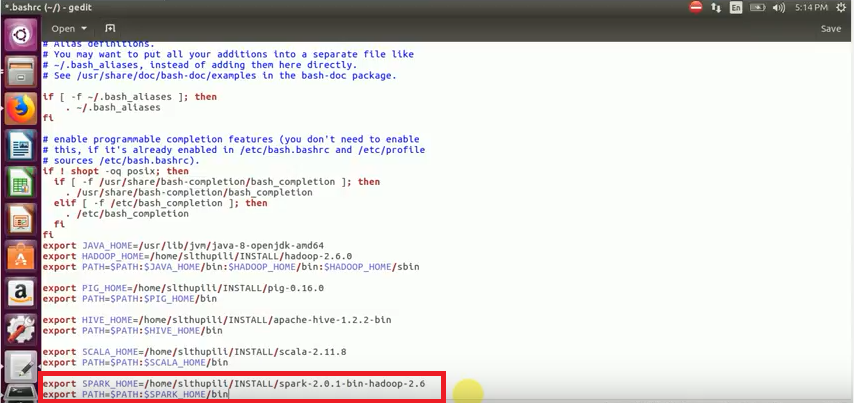

The SPARK_HOME environment variable could also be set using the .bashrc file or similar user or system profile scripts. You need to do this if you wish to persist the SPARK_HOME variable beyond the current session.
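One way to persist the variable is to append an export line to the profile script, taking care not to add it twice. The following is a sketch under assumptions: the function name persist_spark_home is hypothetical, and /opt/spark is assumed to be the install location used in this exercise.

```shell
# Hypothetical helper: append "export SPARK_HOME=<dir>" to a profile
# script, but only if that exact line is not already present.
persist_spark_home() {
    spark_home="$1"                      # e.g. /opt/spark (assumed location)
    profile="${2:-$HOME/.bashrc}"        # defaults to the current user's .bashrc
    line="export SPARK_HOME=$spark_home"
    # -x matches the whole line, -F treats the pattern as a fixed string
    if ! grep -qxF "$line" "$profile" 2>/dev/null; then
        printf '%s\n' "$line" >> "$profile"
    fi
}
```

For example, persist_spark_home /opt/spark updates ~/.bashrc, and the setting takes effect in new sessions or after running source ~/.bashrc.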

The java -version output should end with a line similar to the following:

OpenJDK 64-Bit Server VM (build 24.91-b01, mixed mode)

Extract the Spark package and create SPARK_HOME:

$ tar -xzf spark-1.5.2-bin-hadoop2.6.tgz

$ sudo mv spark-1.5.2-bin-hadoop2.6 /opt/spark
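The extract-and-move step can be wrapped with a couple of safety checks so that an existing installation is not overwritten. This is only a sketch: the function name install_spark_tarball is hypothetical, and it assumes the archive unpacks to a single top-level directory, as the Spark package in this example does.

```shell
# Hypothetical helper: unpack a tarball into a staging directory and
# move its top-level directory to the destination path.
install_spark_tarball() {
    tarball="$1"    # e.g. spark-1.5.2-bin-hadoop2.6.tgz
    dest="$2"       # e.g. /opt/spark (needs root) or any writable path
    if [ ! -f "$tarball" ]; then
        echo "tarball not found: $tarball" >&2
        return 1
    fi
    if [ -e "$dest" ]; then
        echo "refusing to overwrite existing path: $dest" >&2
        return 1
    fi
    staging=$(mktemp -d) || return 1
    tar -xzf "$tarball" -C "$staging" || return 1
    # Assumption: the archive contains exactly one top-level directory.
    top=$(ls "$staging" | head -n 1)
    mv "$staging/$top" "$dest" && rmdir "$staging"
}
```

Writing to /opt/spark requires root privileges, so the function would need to run from a root shell for that destination; any writable directory works for a trial run.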


Try it Yourself: Install Spark on Red Hat/CentOS

In this example, I’m installing Spark on a Red Hat Enterprise Linux 7.1 instance. However, the same installation steps would apply to CentOS distributions as well.

As shown in Figure 3.1, download the spark-1.5.2-bin-hadoop2.6.tgz package from your local mirror into your home directory using wget or curl.

If Java 1.7 or higher is not installed, install the Java 1.7 runtime and development environments using the OpenJDK yum packages (alternatively, you could use the Oracle JDK instead):

$ sudo yum install java-1.7.0-openjdk java-1.7.0-openjdk-devel

Confirm Java was successfully installed:

$ java -version
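The "Java 1.7 or higher" requirement can also be checked in a script rather than by eye. The sketch below parses the quoted version string that java -version prints; the function name java_at_least_1_7 is hypothetical, and the parsing assumes the usual first line of the form java version "1.7.0_91" or openjdk version "11.0.2".

```shell
# Hypothetical helper: succeeds when the quoted version string found in
# `java -version` output is 1.7 or newer.
java_at_least_1_7() {
    # Pull the first quoted version, e.g. 1.7.0_91 from: java version "1.7.0_91"
    ver=$(printf '%s\n' "$1" | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
    major=${ver%%.*}            # text before the first dot
    major=${major%%[!0-9]*}     # keep leading digits only
    rest=${ver#*.}              # text after the first dot
    minor=${rest%%[!0-9]*}      # leading digits of the second component
    [ -n "$major" ] || return 1
    if [ "$major" -eq 1 ]; then
        [ "${minor:-0}" -ge 7 ]   # legacy numbering: 1.7, 1.8
    else
        [ "$major" -ge 2 ]        # newer numbering: 9, 11, 17, ...
    fi
}
```

Because java -version writes to standard error, a typical call would be java_at_least_1_7 "$(java -version 2>&1)", with the yum install step run only when the check fails.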